Make your existing solution tastier with serverless salt: distributed system

This article is the third in a series on “serverless.” I recommend starting at the beginning of the series, as I introduce concepts incrementally. The links to previous articles are included here:

- Introduction

- Feasibility

- Distributed system

- ...

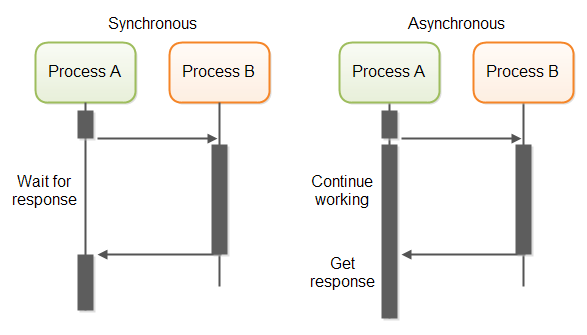

If you have read the previous articles, you know how a serverless function can be synchronously requested from an application. In this article, I’ll better explain the difference between synchronous and asynchronous modes and upgrade the example I gave to be asynchronous. The idea is to better integrate with distributed systems, but as you will see, it comes with some challenges.

Let me start with the definitions:

- Synchronous mode: wait for a request to finish before moving on to another task

- Asynchronous mode: move on to another task before the request is finished

There is no “better” option; it depends on what has to be executed and in what context.

- Synchronous mode is often used for a simple implementation that reduces the use of OS process resources (CPU, file system, memory, etc.), but it keeps worker/connection resources open for an unpredictably long time

- Asynchronous mode is more often used for a more complex implementation (resiliency, design) that keeps worker/connection resources open for a short and predictable time
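To make the two definitions concrete, here is a minimal Java sketch. The class and method names are hypothetical and are not taken from the Bonita or AWS code; the sketch only contrasts a blocking call with one that returns a future right away.

```java
import java.util.concurrent.CompletableFuture;
import java.util.function.Supplier;

// Hypothetical helper contrasting the two request modes.
final class RequestModes {

    // Synchronous: the caller blocks here until the request has finished.
    static String callSync(Supplier<String> request) {
        return request.get();
    }

    // Asynchronous: the request runs on another thread and the caller gets
    // a future immediately, so it can move on to another task.
    static CompletableFuture<String> callAsync(Supplier<String> request) {
        return CompletableFuture.supplyAsync(request);
    }
}
```

With `callAsync`, the caller decides later when (or whether) to wait for the result, for example with `join()` or by attaching a callback with `thenAccept`.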

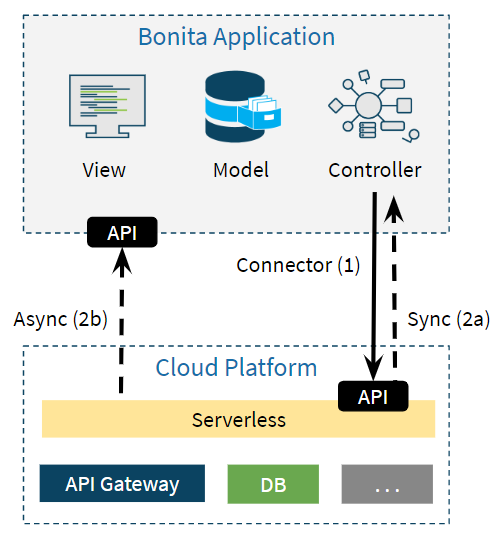

In my example of the Bonita platform and AWS Lambda, we can use both request modes.

When the asynchronous request mode is used, the AWS Lambda function needs to send a callback to the Bonita application when the execution is over. Technically speaking, this requires a few upgrades for the Bonita platform and AWS Lambda function:

- Bonita Callback API: accept callback and trigger related event(s) internally

- Bonita Connector: invoke request asynchronously with callback information

- AWS Lambda function: call back Bonita at the end of the execution

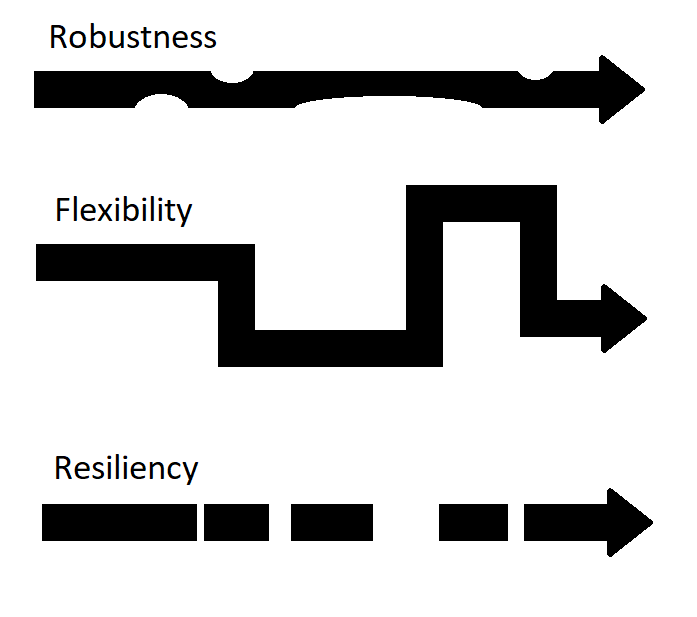

With this advanced distribution of execution, things can go wrong at many levels: network latency, anyone? This leads to yet another challenge: making sure the Bonita application and the AWS Lambda function are not only robust and flexible but also resilient.

- Robustness: how much a system can take before failing

- Flexibility: how much a system can be adapted live

- Resiliency: how much disruption a system can take

To give you a better idea of what resiliency involves, a typical design includes management of duplicated calls, retries, timeouts, events, circuit breakers, etc.

Now that we have reviewed the basics, let me show you what I did to upgrade the application!

As in the previous article, you can follow along in detail with the development resources I shared as a single archive file named “level2-1.0.zip” in the release “level2-1.0” of a dedicated GitHub project. The “Serverless_Level2-1.0.bos” BOS file can be imported into Bonita Studio version 7.7.4 or higher.

Bonita Callback API

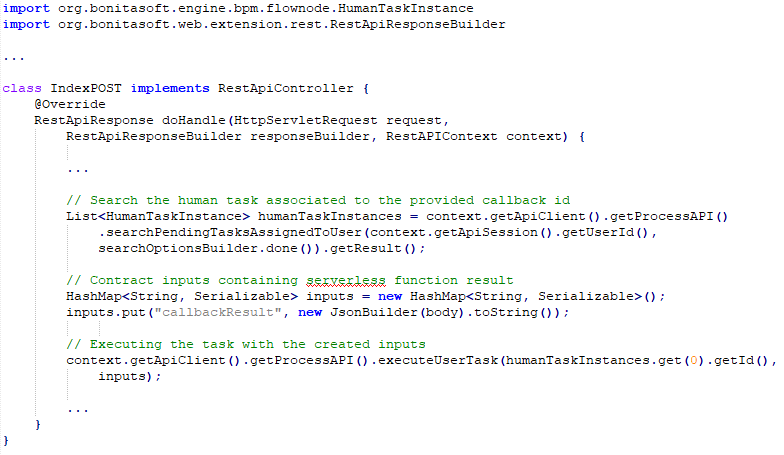

There is no callback API by default in version 7.7.4 of Bonita, so I created a Bonita REST API Extension named “callback” to do the job:

- Access: any logged-in Bonita user

- Method: POST

- URL: /bonita/API/extension/callback

- Payload: JSON object with “id” as a mandatory attribute (unique callback ID)

- Execution: search and execute any human task associated with the provided unique callback ID

A system of retries has been added for more resiliency. It addresses the corner case where the callback request arrives before the human task has been created. You can find the complete source in the “callback.zip” file of the “level2-1.0.zip” archive file.
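The retry idea can be sketched as follows. This is not the code from “callback.zip”: the class name, the method name, and the `lookup` supplier (which stands in for the search of a human task by callback ID) are assumptions made for illustration.

```java
import java.util.Optional;
import java.util.function.Supplier;

// Hypothetical retry helper; "lookup" stands in for the task search.
final class TaskLookupRetry {

    // Calls lookup until it yields a value or maxAttempts is reached,
    // sleeping delayMillis between two attempts.
    static <T> Optional<T> findWithRetry(Supplier<Optional<T>> lookup,
                                         int maxAttempts, long delayMillis) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            Optional<T> found = lookup.get();
            if (found.isPresent()) {
                return found;
            }
            if (attempt < maxAttempts) {
                try {
                    Thread.sleep(delayMillis);
                } catch (InterruptedException e) {
                    // Restore the interrupt flag and give up early.
                    Thread.currentThread().interrupt();
                    return Optional.empty();
                }
            }
        }
        return Optional.empty();
    }
}
```

A bounded number of attempts with a short delay keeps the callback request from hanging forever if the task never shows up; the caller can then answer with an error status.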

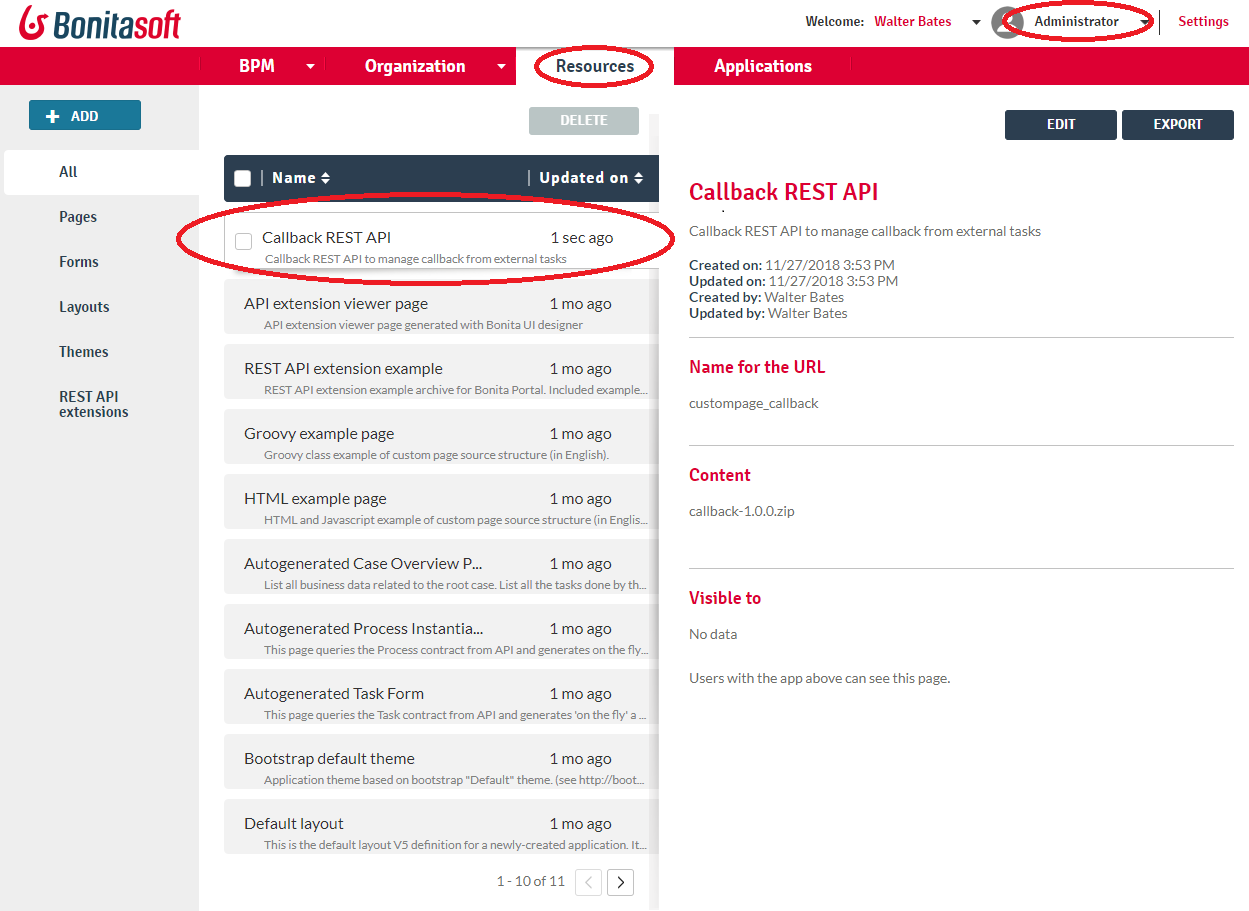

Once it was implemented, I deployed the “callback” REST API Extension on the Bonita runtime. You can find the compiled artifact “callback-1.0.0.zip” in the “level2-1.0.zip” archive file.

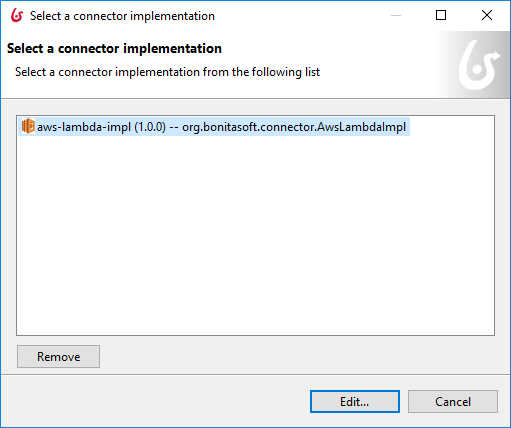

Bonita Connector

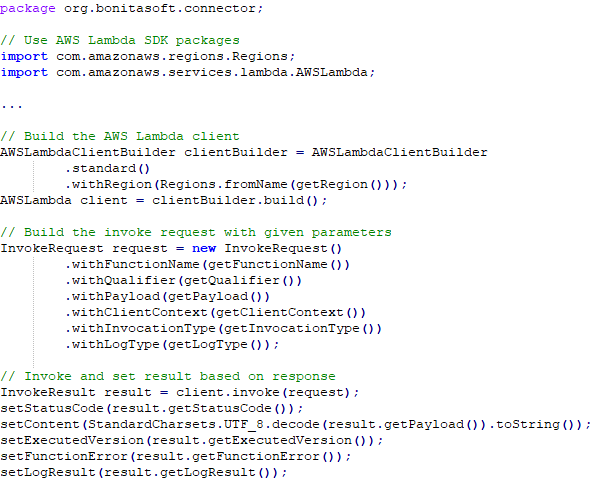

The connector implementation itself does not change because it is flexible enough. It still uses the AWS Lambda Java SDK to instantiate a client, build and invoke the request based on connector inputs, and set the connector outputs with the response.

What really changes is the invocation itself:

- Use “Event” (asynchronous) instead of default (synchronous) for the “Invocation Type” input

- Generate the callback URL based on a unique callback ID and provide both in the “Payload” input
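As a rough illustration of the second point, the callback information could be assembled like this before being passed to the “Payload” input. The JSON field names `callbackId` and `callbackUrl`, the use of a random UUID, and the class itself are assumptions; the actual payload format is whatever the Lambda function in the archive expects.

```java
import java.util.UUID;

// Hypothetical builder for the callback part of the request payload.
final class CallbackPayload {

    // Assumption: a random UUID is unique enough to serve as callback ID.
    static String newCallbackId() {
        return UUID.randomUUID().toString();
    }

    // Appends the REST API Extension path to the Bonita application URL,
    // e.g. http://localhost:8080/bonita -> .../bonita/API/extension/callback
    static String callbackUrl(String bonitaBaseUrl) {
        String base = bonitaBaseUrl.endsWith("/")
                ? bonitaBaseUrl.substring(0, bonitaBaseUrl.length() - 1)
                : bonitaBaseUrl;
        return base + "/API/extension/callback";
    }

    // Minimal JSON rendering; assumes neither value contains characters
    // that need JSON escaping (true for a UUID and a plain URL).
    static String toJson(String callbackId, String callbackUrl) {
        return String.format("{\"callbackId\":\"%s\",\"callbackUrl\":\"%s\"}",
                callbackId, callbackUrl);
    }
}
```

The same callback ID would also be handed to the process that waits for the callback, so both sides agree on which request is being answered.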

Another major change is the response. The normal status code is 202 (Accepted) instead of 200: it confirms that the request has been accepted by AWS Lambda but without any guarantee that it will be executed successfully. Only a callback can confirm the execution result.

All these design changes are taken into account in the upgraded version of the Bonita application as explained later in this article. If you want to check the implementation in detail, import the BOS file into Bonita Studio and check the “aws-lambda-impl” connector implementation.

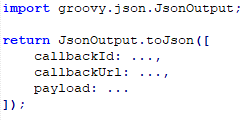

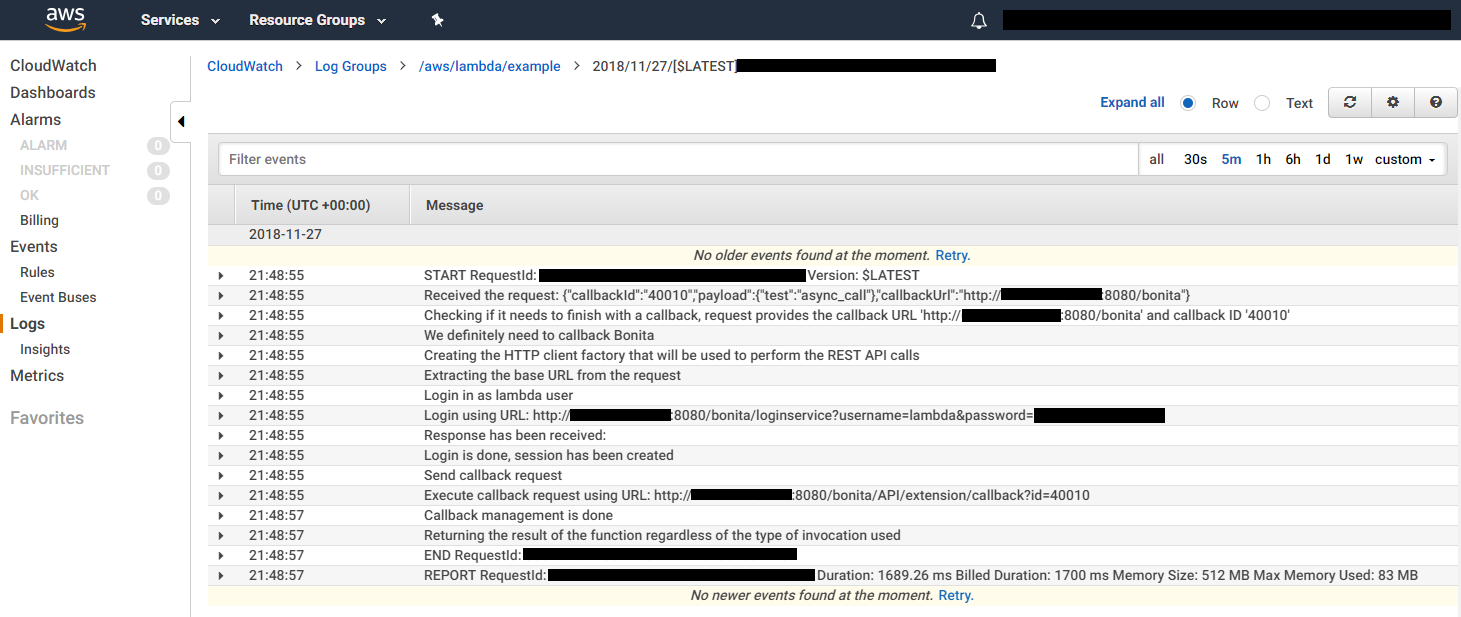

AWS Lambda function

The necessary upgrade is to call back Bonita right before the end of the function if callback information is provided in the request payload. The callback is a sequence of two calls:

- Log in programmatically with the dedicated Bonita user

- Request the provided callback URL with the result in the payload and a valid token in the cookies (given in the login response)
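The two calls could be built as shown below with the standard `java.net.http` API (Java 11+). This is a sketch, not the code from “aws_lambda_example.zip”. The `/loginservice` path, the `redirect=false` form field, and the `JSESSIONID` / `X-Bonita-API-Token` cookie and header names reflect my understanding of Bonita's REST authentication and should be checked against the documentation of the version in use; the class and method names are hypothetical.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpRequest;
import java.nio.charset.StandardCharsets;

// Hypothetical request builders for the two-step callback.
final class BonitaCallbackRequests {

    // Step 1: form-encoded login request for the dedicated Bonita user.
    static HttpRequest login(String bonitaBaseUrl, String username, String password) {
        String form = "username=" + URLEncoder.encode(username, StandardCharsets.UTF_8)
                + "&password=" + URLEncoder.encode(password, StandardCharsets.UTF_8)
                + "&redirect=false";
        return HttpRequest.newBuilder(URI.create(bonitaBaseUrl + "/loginservice"))
                .header("Content-Type", "application/x-www-form-urlencoded")
                .POST(HttpRequest.BodyPublishers.ofString(form))
                .build();
    }

    // Step 2: POST the result to the callback URL, replaying the session
    // cookie and API token extracted from the login response.
    static HttpRequest callback(String callbackUrl, String sessionId,
                                String apiToken, String jsonBody) {
        return HttpRequest.newBuilder(URI.create(callbackUrl))
                .header("Content-Type", "application/json")
                .header("Cookie", "JSESSIONID=" + sessionId
                        + "; X-Bonita-API-Token=" + apiToken)
                .header("X-Bonita-API-Token", apiToken)
                .POST(HttpRequest.BodyPublishers.ofString(jsonBody))
                .build();
    }
}
```

Both requests would then be sent with `HttpClient.send(...)`; the JSON body of the second one must at least carry the mandatory “id” attribute expected by the “callback” REST API Extension.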

Add some logs and it runs like a charm.

You can find the complete source in the “aws_lambda_example.zip” file, and the compiled JAR in the “level2-1.0.zip” archive file. (Check the previous article if you need more information about how to deploy it on AWS Lambda; the procedure is exactly the same.)

For security purposes, the Bonita user credentials used to log in should be encrypted. It is not the case here, but this could be done with AWS Secrets Manager, for example.

Also, a fair amount of resilience would be nice to have, like:

- Provide useful logs in the callback payload

- Ensure a callback is done even in the case of a function execution failure

- Retry if the Bonita service cannot be reached

- Do not execute the same job twice even if multiple requests are received
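The last point is essentially idempotency. A minimal in-memory sketch, with a hypothetical class name and assuming each request carries a unique job ID (the callback ID could play that role), might look like this:

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical guard that lets a given job ID through only once.
final class JobDeduplicator {

    // Thread-safe set of job IDs already accepted by this instance.
    private final Set<String> seen = ConcurrentHashMap.newKeySet();

    // Returns true the first time a job ID is presented, false afterwards.
    boolean firstTime(String jobId) {
        return seen.add(jobId);
    }
}
```

Keep in mind that memory is not shared between Lambda execution environments, so a real implementation would need to record the IDs in a shared store rather than in a local set.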

Bonita Application

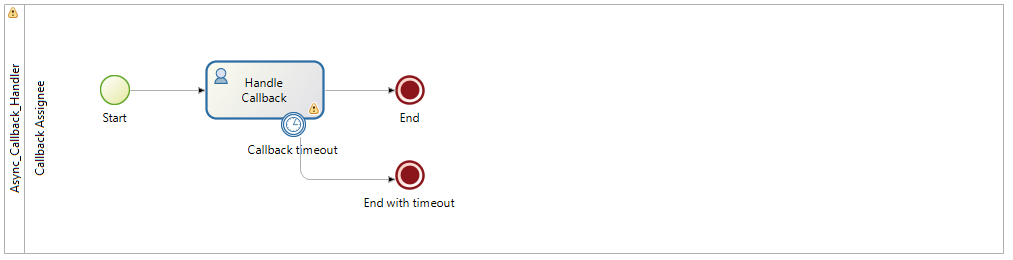

Generic callback process

I defined a brand new process that waits for an expected callback and introduces some resiliency (here I use a timeout, but it could be much more). It is generic, so it can be used for any asynchronous request integration.

This process is not designed to be started by a human but rather only programmatically by other processes. It expects some input parameters to start a new instance:

- callbackId: the unique callback ID to wait for

- callbackTimeout: how much time to wait before returning with an error

- callbackAssigneeId: the ID of the user who can do the callback (likely a technical user used by an external service)

It returns the callback result, if any.

The wait is designed through a human task that is executed by the “callback” REST API Extension when a callback is received with the right callback ID. This task can only be executed by the given assignee (Lambda in our example) and requires a value for the “callbackResult” input.
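Outside of Bonita, the same "wait for a callback ID, with a timeout" behavior can be approximated in plain Java. The sketch below is only an analogy for the process described above, not how Bonita implements it; the class and method names are hypothetical, while `callbackId`, the timeout, and `callbackResult` mirror the process inputs.

```java
import java.util.Map;
import java.util.Optional;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

// Hypothetical in-memory equivalent of the generic callback process.
final class CallbackWaiter {

    private final Map<String, CompletableFuture<String>> pending = new ConcurrentHashMap<>();

    // Registers the callback ID to wait for (before sending the request).
    void expect(String callbackId) {
        pending.putIfAbsent(callbackId, new CompletableFuture<>());
    }

    // Called when a callback arrives; false if nobody waits for this ID
    // or if a result was already delivered.
    boolean complete(String callbackId, String callbackResult) {
        CompletableFuture<String> future = pending.get(callbackId);
        return future != null && future.complete(callbackResult);
    }

    // Waits for the result; empty if the timeout elapses or the ID is unknown.
    Optional<String> await(String callbackId, long timeoutMillis) {
        CompletableFuture<String> future = pending.get(callbackId);
        if (future == null) {
            return Optional.empty();
        }
        try {
            return Optional.ofNullable(future.get(timeoutMillis, TimeUnit.MILLISECONDS));
        } catch (TimeoutException e) {
            return Optional.empty();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return Optional.empty();
        } catch (ExecutionException e) {
            throw new IllegalStateException(e.getCause());
        } finally {
            pending.remove(callbackId); // the wait is over either way
        }
    }
}
```

Registering the ID before the request is sent avoids losing a callback that arrives very quickly, which is the same race the retries in the “callback” REST API Extension protect against.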

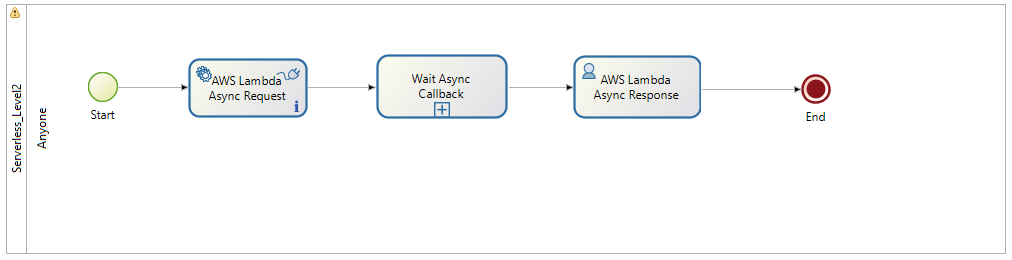

Main process

It now contains more tasks:

- AWS Lambda Async Request: automatic task to make the asynchronous invocation of the AWS Lambda function using the connector with the right configuration

- Wait Async Callback: instantiate the generic callback process with the callback parameters and store the result for further use

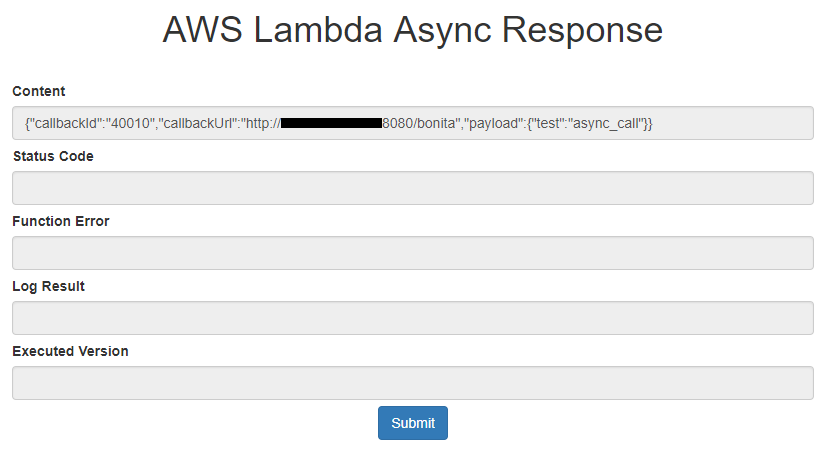

- AWS Lambda Async Response: display a form with the stored result to the end-user

And more parameters:

- aws_callbackUrl: the Bonita application URL

- aws_timeout: callback timeout used for the generic callback process call

If you want to see more detail, import the BOS file into Bonita Studio and open the diagrams.

Make it run

Start a new instance of the main process with valid parameters, and a few seconds later the last task displays the asynchronous request payload as expected.

This demonstrates pretty well that it is not that complicated to integrate a serverless function into an application. As is often the case with distributed systems, the pain comes with an enterprise-grade solution. Depending on the applications you build and the libraries or platforms you use, it can be super simple or quite complex to achieve. On the Bonita side, the platform is evolving to integrate more and more features to make such integration as seamless as possible. A great example is the brand new REST API that comes with version 7.8 for this purpose.

The next article of this series will focus on abstracting the administration of a serverless function when integrated with an application, so check for updates!

I would appreciate your feedback in the comments: enhancements, new topics to cover, etc. If you like what you read, let us know and we will spread the word!